Why Calendar-Based Inspections Can’t Keep Up With Fast Failure Modes

The Assumption Behind Calendar-Based Inspection Programs

Most reliability programs rely on calendar-based inspections for a simple reason: they are predictable, auditable, and easy to plan. Walkdowns happen weekly. Thermal scans happen monthly. Checklists get completed. Findings get logged.

On paper, this looks like coverage.

In practice, many failures still arrive as surprises. Bearings seize between rounds. Belts fail days after a clean inspection. Electrical cabinets overheat without prior flags.

When this happens, the instinctive response is often to question execution. Were inspections rushed? Was something overlooked?

More often, the issue is structural. Calendar-based inspections were never designed to catch failures that develop faster than the inspection cycle. Calendar-based inspections miss fast failure modes because they only show asset condition at fixed moments in time, not how it changes between rounds.

The Actual Problem: Inspection Frequency Is Not Coverage

Inspection programs are often evaluated by how often assets are checked. Weekly inspections are assumed to provide better coverage than monthly ones. Increasing frequency feels like reducing risk.

But frequency and coverage are not the same thing. Increasing inspection frequency does not eliminate blind spots created between inspection rounds.

Inspections provide snapshots. They show asset condition at a specific moment in time. Anything that changes between inspections is invisible by design.

Fast-developing failure modes exploit this gap. Degradation can initiate, accelerate, and reach failure thresholds entirely between scheduled rounds, regardless of how disciplined the inspection process is.

This is not a failure of inspection quality. It is a limitation of the inspection model itself.

Fast Failure Modes Calendar-Based Inspections Routinely Miss

Not all failures evolve slowly. Many develop under operating conditions that inspections do not capture. These failures are not invisible because inspections are poorly executed, but because they develop under conditions inspections are not present to observe.

Common examples include:

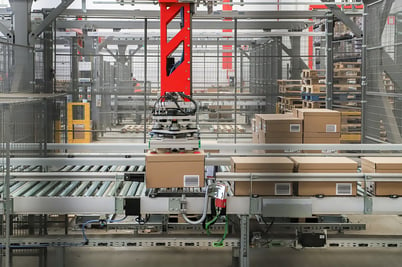

- Conveyors bearings that overheat under sustained load but appear normal when idle during inspections

- Belts that degrade rapidly due to misalignment or tension changes introduced after an inspection

- Electrical cabinets that remain within limits under normal conditions, then deteriorate quickly during peak demand

In each case, inspections may show a healthy asset shortly before failure. The issue is not that warning signs were ignored. The issue is that those signs only emerged between rounds.

Why Between-Rounds Degradation Creates Hidden Blind Spots

Calendar-based inspections create blind spots that teams often underestimate. Between-rounds degradation refers to condition changes that occur entirely between scheduled inspection intervals.

Once an inspection is completed, there is an implicit assumption of coverage until the next round. This assumption holds only if degradation progresses slowly and predictably.

In modern operations with high duty cycles, variable loads, and extended run times, that assumption no longer holds..

Degradation may:

- Initiate under specific operating conditions

- Escalate during sustained load

- Disappear temporarily when conditions normalize

Inspections capture none of this behavior. The blind spot is not obvious because the inspection program appears thorough on paper.

Why This Is Not a Labor or Discipline Problem

When inspections miss failures, the response is often to increase rigor: More checklists, more sign-offs, more accountability. But this rarely solves the underlying issue.

Inspection programs can be executed perfectly and still miss fast-developing failures. No amount of diligence can compensate for gaps that exist between inspection intervals.

This is not about technicians failing to look closely enough. It is about relying on a method that assumes degradation will wait to be observed. Improving inspection discipline does not address the time gaps where fast failure modes develop.

The Limits of Calendar Logic in Modern Operations

Calendar-based inspections were designed for environments where assets operated under relatively stable conditions. Degradation progressed slowly. Time was a reasonable proxy for risk.

Modern operations do not behave this way.

High utilization, fluctuating demand, and compressed access windows mean that risk is no longer evenly distributed across time. Failures are increasingly tied to operating conditions rather than elapsed days.

When inspections are tied to the calendar instead of behavior, fast failure modes will continue to slip through unnoticed.

What This Means for Reliability Programs

Inspection programs are still valuable. They catch visible wear, confirm known issues, and support compliance requirements. But what they cannot do is provide continuous coverage for failures that develop quickly or intermittently.

Treating inspections as sufficient coverage creates a false sense of security. The absence of findings is interpreted as asset health, even when degradation is actively progressing out of sight.

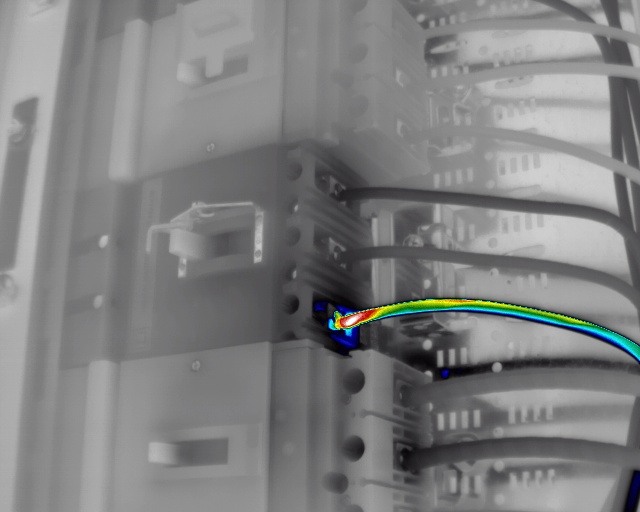

See it in action: This multinational retailer caught a router discharge thanks to MSAI 👇

Rethinking Inspection Assumptions

The question reliability teams need to ask is not whether inspections are being performed often enough.

Instead, ask yourself: “Can my inspections see the failures that matter most?”

As failures develop faster and operating conditions change more often, inspection programs alone struggle to keep pace with how degradation actually unfolds.

That gap, left unexamined, is where surprises originate.

Looking for a way to keep up with fast failure modes? Talk to an expert.

Turn early signals into uptime.

Book a working session with one of our condition-based monitoring experts, and we’ll review your assets, assess your maintenance maturity, and show how multi-sensor monitoring catches issues hours, days, or weeks earlier than manual rounds - giving you a clear path to fast, measurable ROI.